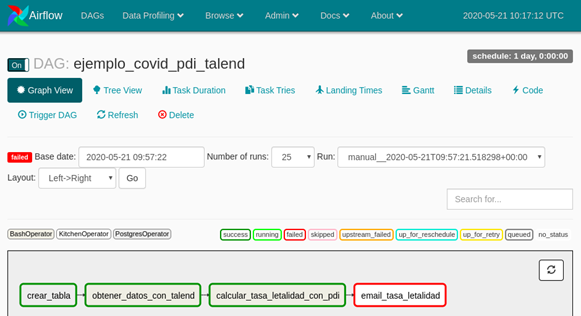

Green means everything went well, red means that the task has failed, and yellow means the task didn't run yet and is up to retry. Then if you go to the "Tree View" you can see all the runs for this DAG. from airflow import DAGįrom _operator import BashOperatorīash_command = 'YOUR-LOCAL-PATH/airflow-tutorial/pdf_to_text.sh Let's create the first_dag.py script to understand how all of this fits together. Since this is your first time using Airflow, in this tutorial we'll trigger the DAG manually and not use the scheduler yet. When you run a workflow it creates a DAG Run, an object representing an instantiation of the DAG in time. In this tutorial you'll only use the BashOperator to run the scripts. There are BashOperators (to execute bash commands), PythonOperators (to call Python functions), MySqlOperators (to execute SQL commands) and so on. A DAG is a Python script that has the collection of all the tasks organized to reflect their relationships and dependencies, as stated here. With Airflow you specify your workflow in a DAG (Directed Acyclic Graph). Now go ahead and open to access the Airflow UI. Then open another terminal window and run the server: $ source. $ airflow initdb #start the database Airflow uses $ airflow version # check if everything is ok You'll create a virtual environment and run these commands to do install everything: $ python3 -m venv .env
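To make the "tasks organized to reflect their dependencies" idea concrete, here is a minimal sketch in plain Python, independent of Airflow. It is not how Airflow is implemented; it only illustrates how a dependency graph determines a valid run order. The task names are hypothetical labels for the two steps of this tutorial, and graphlib requires Python 3.9 or newer.

```python
from graphlib import TopologicalSorter

# Hypothetical task names for the two steps of this tutorial's workflow.
# Each key maps to the set of tasks that must finish before it can start.
dependencies = {
    "pdf_to_text": set(),
    "extract_metadata": {"pdf_to_text"},
}


def execution_order(deps):
    """Return one valid run order for the tasks.

    Raises graphlib.CycleError if the dependencies contain a cycle,
    which is why the graph has to be acyclic.
    """
    return list(TopologicalSorter(deps).static_order())


print(execution_order(dependencies))
```

A scheduler that knows this graph can refuse to start extract_metadata until pdf_to_text has succeeded, which is the kind of bookkeeping a DAG lets Airflow do for you.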

To run the first task you'll use the ImageMagick tool to convert the .pdf page to a .png file and then use tesseract to convert the image to a .txt file. These tasks will be defined in a Bash script. To extract the metadata you'll use Python and regular expressions.

This is the script to do all that:

    #!/bin/bash
    PDF_FILENAME="$1"  # name of the PDF file, passed as the first argument
    # Render the PDF as a 600 dpi PNG, then OCR it into "$PDF_FILENAME.txt"
    convert -density 600 "$PDF_FILENAME" "$PDF_FILENAME.png"
    tesseract "$PDF_FILENAME.png" "$PDF_FILENAME"

Save this in a file named pdf_to_text.sh, then run chmod +x pdf_to_text.sh and finally run ./pdf_to_text.sh pdf_filename to create the .txt file.

Script to extract the metadata and save it to a .csv file

Now that you have the txt file it's time to create the regex rule to extract the data. The goal is to extract what happens in each hour of the meeting. You can extract the data by using a pattern that captures the hours and what happens immediately after that and before a line break. With regex you can do it with this pattern: (\d:\d\d)-(\d:\d\d) (.*\n). You'll then save this data into a .csv file.

Save the script in a file named extract_metadata.py and run it with python3 extract_metadata.py. You can see that the file metadata.csv was created.

In this article we're not dealing with the best practices on how to create workflows, but if you want to learn more about it I highly recommend this talk by the creator of Airflow himself: Functional Data Engineering - A Set of Best Practices.

Ok, now that you have your workflow it's time to automate it using Airflow. To use Airflow we run a web server and then access the UI through the browser. You can schedule jobs to run automatically, so besides the server you'll also need to run the scheduler. In a production setting we usually run it on a dedicated server, but here we'll run it locally to understand how it works.
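The regex step described in this section can be sketched as follows. This is only an illustration under stated assumptions, not the tutorial's original extract_metadata.py: the function names, the CSV column names and the sample text are all made up for the example. Only the pattern itself comes from the text, and note that as written it matches single-digit hours only (9:00 but not 10:00).

```python
import csv
import re

# Pattern from the text: start hour, end hour, and the rest of the line.
PATTERN = re.compile(r"(\d:\d\d)-(\d:\d\d) (.*\n)")


def extract_rows(text):
    """Return (start, end, description) tuples for every line that matches."""
    return [(start, end, desc.strip()) for start, end, desc in PATTERN.findall(text)]


def save_rows(rows, csv_path):
    """Write the rows to csv_path under a header line (column names are assumptions)."""
    with open(csv_path, "w", newline="") as f:
        writer = csv.writer(f)
        writer.writerow(["start", "end", "description"])
        writer.writerows(rows)


# Made-up sample standing in for the text produced by the OCR step.
sample = "Agenda\n8:00-8:30 Registration\n9:00-9:45 Opening remarks\n"
rows = extract_rows(sample)
print(rows)
```

Calling save_rows(rows, "metadata.csv") would then write the extracted rows to the file name used in this tutorial.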

Data extraction pipelines might be hard to build and manage, so it's a good idea to use a tool that can help you with these tasks. Apache Airflow is a popular open-source workflow management platform and in this article you'll learn how to use it to automate your first workflow.

To follow along I'm assuming you already know how to create and run Bash and Python scripts. This tutorial was built using Ubuntu 20.04 with ImageMagick, tesseract and Python3 installed.

Before Airflow: how to prepare your workflow

One important concept is that you'll use Airflow only to automate and manage the tasks. This means that you'll still have to design and break down your workflow into Bash and/or Python scripts. To see how this works we'll first create a workflow and run it manually, then we'll see how to automate it using Airflow.

In this tutorial you will extract data from a .pdf file. These are the main tasks to achieve that:

1. Extract the text from the .pdf file and save it to a .txt file.
2. Extract the desired metadata from the text file and save it to a .csv file.
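Running the two tasks by hand, in order, can be sketched as a small Python driver. This is a rough illustration only: it assumes the scripts are named pdf_to_text.sh and extract_metadata.py, as in this tutorial, and that both sit in the current directory. The function names are hypothetical.

```python
import subprocess


def build_commands(pdf_filename):
    """Return the commands for the two tasks, in the order they must run."""
    return [
        ["./pdf_to_text.sh", pdf_filename],  # task 1: PDF -> text
        ["python3", "extract_metadata.py"],  # task 2: text -> CSV
    ]


def run_workflow(pdf_filename):
    """Run each task in turn; check=True stops at the first failing command."""
    for command in build_commands(pdf_filename):
        subprocess.run(command, check=True)
```

Ordering the steps, stopping on failure and rerunning are all manual here, and that is the part Airflow is meant to take over.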
